Towards Real-Time DNN Inference on Mobile Platforms with Model Pruning and Compiler Optimization

applications

applicationsAbstract

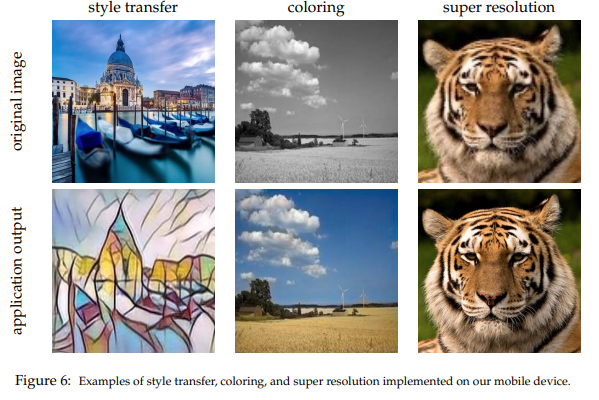

High-end mobile platforms rapidly serve as primary computing devices for a wide range of Deep Neural Network (DNN) applications. However, the constrained computation and storage resources on these devices still pose significant challenges for real-time DNN inference executions. To address this problem, we propose a set of hardware-friendly structured model pruning and compiler optimization techniques to accelerate DNN executions on mobile devices. This demo shows that these optimizations can enable real-time mobile execution of multiple DNN applications, including style transfer, DNN coloring and super resolution.

Type

Publication

In International Joint Conference on Artificial Intelligence-Pacific Rim International Conference on Artificial Intelligence 2020